News

What a difference a day makes

IAES were invited to the Bill and Melinda Gates Foundation HQ in Seattle, Washington, United States, to build awareness of our work.

Camels, Ostriches, and Sheep, Oh My! ISDC Meets in Nairobi in Preparation for Portfolio25 Proposal Reviews

ISDC met in Nairobi in preparation for its forthcoming Portfolio25 independent review of research proposals. This meeting, hosted by ILRI, gave ISDC an

Connecting with CGIAR researchers in Kenya, whether revolution or evolution, they seek consistency!

Ibtissem Jouini, CGIAR's Senior Evaluation Manager, and Edwin Asare, Research Analyst, unveil insights gathered through consultations with CGIAR

Progress is built on iteration and fertile foundations

IAES have been establishing a fertile bed for our ongoing independent activities to support CGIAR in 2024.

Criteria to Judge Qualitative Research

In this blog, Monica Biradavolu outlines a set of criteria to evaluate the quality of qualitative research and assesses their applicability to

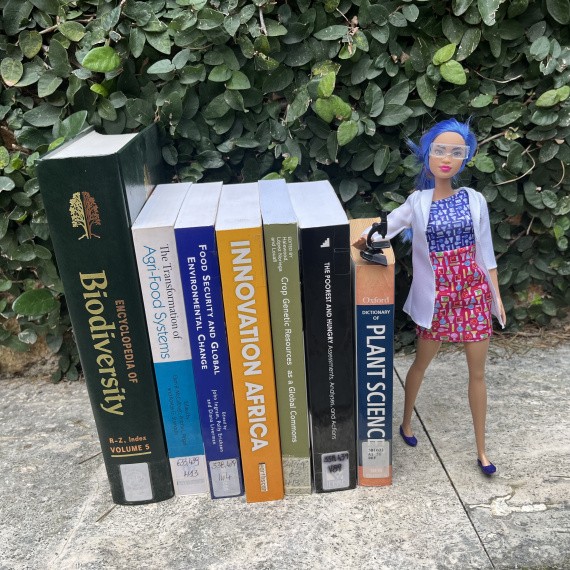

Evaluation on the Ground: Insights from CIP and IITA Genebanks

In a recent interview, we sat down with Sarah Humphrey and David Coombs, co-leads of the 2023 Genebank Platform Evaluation, to delve into the

Vacancy: SPIA Postdoctoral Fellow, Causal Impact Assessment

Apply by 29 February 2024!

New Megatrend Insights for the Research Portfolio

Examining the effect of recent global shocks is timely as CGIAR prepares its 2025-2027 research and innovation portfolio.